Defender Spotlight Erik Heuser

Interviewer: Can you tell us about a favorite incident that you responded to?

Erik: The most memorable engagements I’ve had were from the 2010–2015 timeframe, when there was a significant amount of non-subtle nation-state activity. It was right around the time when we started using full-packet capture technology and EDRs. It was like the lights came on and there were the roaches. Predictably, they adapted and began using stealthier tactics. It was an arms race, and it set up some pretty interesting encounters.

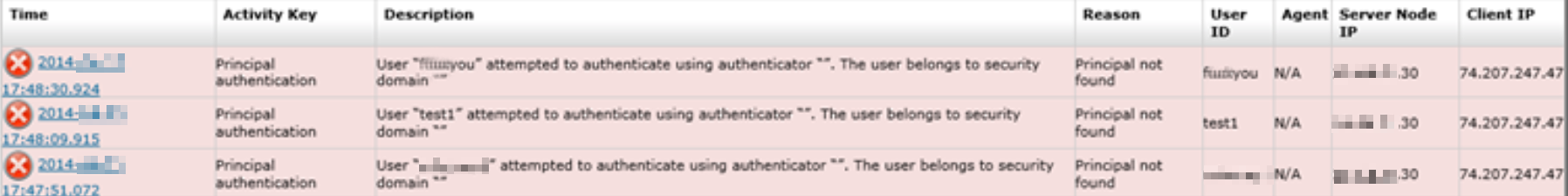

One engagement used beaconing malware delivered by XSS with Java zero-day payloads and credential phishing. After the initial foothold was secured, they scattered backdoors with no entrenchment/persistence mechanism throughout the environment. These implants were instead activated by scheduled tasks sent from an Outlook Web Access (OWA) China Chopper web shell, a one-liner shell that executed any code sent to it. They had VPN access (no 2FA) as well as web shells on internal and the external OWA servers. By the time we got there, they were in the sustainment phase and were coming back every 2-3 weeks to read the browsing history of several engineers, check the status of their projects, and then exfil the data. The client was great to work with and let us set up monitoring and even some canaries.

Remediation day came, and the plan developed over the previous weeks was executed without a single issue. About a week later they came back and tried the web shell, which had been replaced with a netcat listener that was visible to our full-packet capture solution. Presumably, they tried their beaconing malware, but it was gone. They attempted to use the VPN, but we had added 2FA. They didn’t like that.

After that, they discovered the client hosted their own message boards so their customers could interact with the engineers directly. They began sending malware attachments with broken-English instructions to execute the payload. When that didn’t work, they used the same XSS vulnerability on the client’s main website that had initially landed them a foothold to send a Python reverse shell to an internal web server with a web shell. We had already found that server and the web shell and replaced it with yet another netcat listener in front of a full-packet capture solution. We were watching it all in real time since we had some of the best instrumentation available.

We were having a lot more fun than they were, but it got to the point where we ended up “interacting” with their malware and phishing lures to get them to go away. They would work around the clock for days at a time, take a break, and then come back with another burst of activity. When they sent credential phishing emails, we’d intercept them and send our own “customized” usernames and passwords that conveyed our displeasure with their activities and the fact that we knew what they were doing. There was quite a lot of the digital equivalent of hand-to-hand combat, and it was one of the most challenging and fun engagements I’ve worked. They were a very persistent threat, and it validated the remediation efforts and the need for deeper visibility during and after the event.

Interviewer: Have you ever had an incident go according to plan?

Erik: I’ve seen both sides and everything in between. When you have cooperation from the stakeholders, access, visibility and authority, it usually goes smoothly. Problems arise when you encounter factionalism, fiefdoms and even some very odd conglomerates that act like separate companies…sometimes. This is all from the perspective of the external incident response firm. Being an internal responder during a complex targeted intrusion will necessarily involve office politics and will tend to be more challenging.

Interviewer: How do you communicate with stakeholders and affected parties throughout the incident response process?

Erik: Being an external firm, it all depended on the “who” and the “what”. Who were we dealing with and what was their level of capability? What type of adversary were they dealing with and how quiet did we have to be? Could we use email? VoIP? We were once provided with a Citrix VDI for access, and after deploying the EDR agents we found that it had a service implant entrenched and beaconing. That wasn’t paranoia; that was respecting the capabilities of several of the groups active at the time.

Interviewer: What has not worked well when communicating during an incident?

Erik: Using hotel WiFi to connect to an intelligence conference call on another continent without a VPN, as the Germans recently discovered. I’m not saying that you need to entertain every Hollywood scenario, but I have seen an employee loudly declare “We’ve been hacked, so we’re going to all need to change our passwords…” to a conference call full of the victim organization’s customers. Controlling who knows what and how they talk about it can save you some serious headaches if what you’re seeing is a real problem.

Interviewer: Are there common issues you see during the remediation phase?

Erik: Acting without a coherent plan and doing it piecemeal. I’ve seen incidents spiral out of control when on-the-spot remediations are performed without understanding the full extent of the compromise. It forces you into a reactive position and your side (us defenders) is now at a disadvantage. Adversaries will usually be able to act faster than you can detect and understand what they’re doing. This gets more pronounced as you scale the size of the network.

I’m not advocating analysis paralysis; every situation is different and there are a near-infinite number of ways they can unfold. I’m saying that if an action is going to be taken, the reaction to it shouldn’t be “and then what?”

Interviewer: What advice would you offer to other incident responders based on your experience?

Erik: Examine your own analysis biases and look for ways to automate them. Use the extra time to dig deeper and become better. This job will always teach you something, or at least present the opportunity.

Interviewer: Are there any specific tools, resources, or training programs that you found particularly valuable?

Erik: Opensecuritytraining.info will always have a special place in my heart, along with IDA Pro and the various malware analysis books and PDFs I’ve read over the years. I suppose that’s what sent me down the rabbit hole that ended with BIRT. I wanted a highly integrated application that could ingest artifact files from endpoints and give me insights in minutes. Products like Velociraptor or KAPE are great, but they didn’t go far enough, in my opinion.

Artifact retrieval and parsing are a very good starting point. It’s very nice that the artifact parsers are all in one tool, but now what? A lot of the knowledge in IR is tribal, meaning it’s passed via direct experience and from working with other seasoned IR professionals. The MITRE ATT&CK matrix is a very good resource for describing the “how”, even if the behaviors are sometimes very general and don’t make good “signatures”.

BIRT takes the next few steps: reconstructing the endpoint while applying Micro-Techniques, which are specific implementations of Sub-Techniques that gather events as evidence and contextual data, as well as managing the investigation itself. I’ve been on many engagements complex enough that one or two investigators were fully engaged managing the master timeline and trying to make sense of what the actors were really after. That’s a lot of manual effort that could be automated and money that could be saved. So BIRT manages and automates these portions of the Incident Response process, leaving you with the fun stuff (in this man’s opinion).
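To give a feel for the bookkeeping being described, here is a minimal sketch of what maintaining a master timeline involves: merging per-source event lists into one chronological view. This is not BIRT’s implementation, which the interview does not detail. The `Event` fields, the `build_master_timeline` helper, and the sample hosts and artifacts are all hypothetical, and each input stream is assumed to be already sorted by time.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from heapq import merge
from typing import Iterable, Iterator


@dataclass(frozen=True)
class Event:
    """One parsed artifact record (hypothetical schema)."""
    timestamp: datetime
    host: str
    source: str       # which artifact the event came from
    description: str


def build_master_timeline(*streams: Iterable[Event]) -> Iterator[Event]:
    """Lazily merge event streams into one chronological stream.

    Assumes every input stream is already sorted by timestamp.
    """
    return merge(*streams, key=lambda event: event.timestamp)


if __name__ == "__main__":
    def at(hour: int, minute: int) -> datetime:
        # Fabricated timestamps, for illustration only.
        return datetime(2014, 1, 1, hour, minute, tzinfo=timezone.utc)

    web_log = [
        Event(at(1, 0), "owa01", "web_log", "POST to web shell page"),
        Event(at(1, 9), "owa01", "web_log", "second POST to web shell page"),
    ]
    task_log = [
        Event(at(1, 2), "eng-ws07", "scheduled_tasks", "remote task created"),
    ]
    for event in build_master_timeline(web_log, task_log):
        print(event.timestamp.isoformat(), event.host, event.source, event.description)
```

Merging is the easy part; the manual effort Erik describes comes from doing this across millions of events per endpoint and then deciding which of them matter.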

You still have the master timeline, which can be searched with a cardinality index viewer, and you have a view of all the collected evidence, which has been logically organized and labeled and is oftentimes several orders of magnitude less data. There are dozens of specialized tools and features of BIRT that allow an investigator to quickly carve through millions of events per endpoint. When you finally come up for air, the Investigation is organized in timelines and hierarchy trees, giving a 10,000-foot view of what’s happening. I see it as a step-change in Incident Response, and I hope others will as well.

Interviewer: How do you prioritize continuous improvement in incident response capabilities?

Erik: I develop BIRT. I’ll gladly take feedback and improve the product. The Micro-Technique Engine in BIRT was developed so that you could accurately express any scenario possible on an endpoint, without exception. If you find a scenario that isn’t possible to match or is overly difficult to express, let me know; I’d consider that a bug.

I’d also like to give a shout-out to https://thedfirreport.com. One of the major gripes I’ve had with whitepapers released by vendors is the lack of detail on actions taken by the actor. The focus is on the initial lure, entrenchment and usually some reverse engineering of the implant. Then they’ll list some tools, IPs and domains, and call it a day. The DFIR Report includes these items but also goes over timelines of actions the actor took in the network. That’s the interesting part for me.

Interviewer: What would you like to see infosec vendors do?

Erik: When I worked at RSA, I became a sort of liaison to engineering, offering suggestions and use cases. Sometimes I’d create synthetic data to help build new detection capabilities, or I’d directly create content like the RSA IR hunting content pack and RSA IR Hunting Guide. There needs to be more of this, and it needs to be faster. Practitioners should be looped into product development, and engineers need to really listen to what they’re seeing in the field. There are so many products built for IR by people who’ve never been part of the process, and it shows.
